The Project

The QoS feature of the SteelHead was being re-implemented, which made it the right time for a redesign. QoS is extremely complex, and the design process was long. Through extensive UX research and design, I proposed a new mental model for QoS in the SteelHead. The engineering team liked it so much that they changed the underlying implementation to match the UX-proposed mental model. The feature also introduced a new paradigm of mass configuration to the SteelCentral Controller. The entire project was an incredible success.

Feature Research

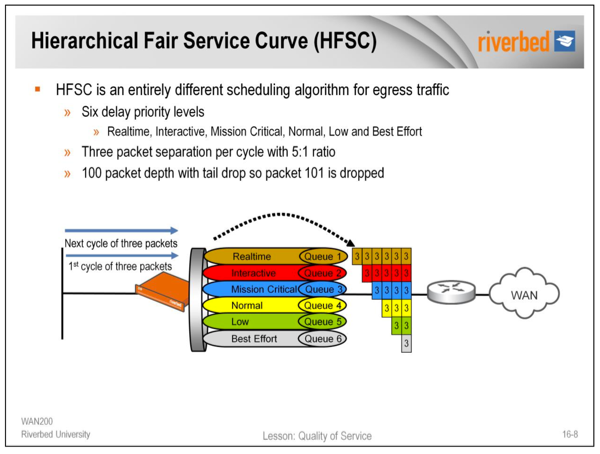

QoS is an enormously complex feature. The first step in the process was to fully understand how QoS works, what it's intended to do, and what is needed to make it do that. I studied everything from the HFSC algorithm and QoS classes to deep packet inspection, latency priority, and minimum and maximum bandwidth. It got to the point where the engineers couldn't answer my questions without looking into their source code.

User Research

After learning everything there is to know about how QoS works, it was time to speak with network administrators to see how they actually use it. I went onsite at several companies in Illinois, Montreal, and the UK. The user researcher and I also conducted remote interviews with many more firms. We learned what they used QoS for, what issues they had with the current version, and what they wanted to do with the feature in the future.

Sites

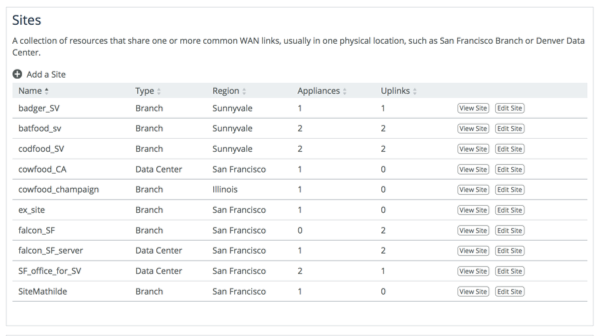

QoS needs to know the speed of every network connection in the system so it can divide up resources appropriately. Network administrators manage hundreds, or even thousands, of locations, and each location can have multiple network connections. So I created an entire concept around this called 'Sites.' Each site has any number of uplinks (network connections), and each uplink has an upstream and a downstream bandwidth associated with it. This gives the QoS system the information it needs about bandwidth restrictions across the entire network.

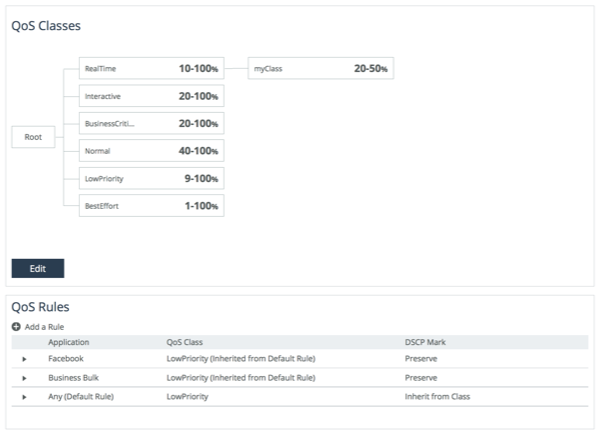
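As a rough illustration of the Sites concept, here is a minimal Python sketch of the data model. The class names, field names, site name, and bandwidth figures are all assumptions made up for this example; they are not the SteelHead's actual schema.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Uplink:
    """One network connection at a site (hypothetical model)."""
    name: str
    up_kbps: int    # upstream bandwidth
    down_kbps: int  # downstream bandwidth


@dataclass
class Site:
    """A location with any number of uplinks."""
    name: str
    uplinks: List[Uplink] = field(default_factory=list)

    def total_up_kbps(self) -> int:
        # Sum of upstream bandwidth across every uplink at this site.
        return sum(u.up_kbps for u in self.uplinks)

    def total_down_kbps(self) -> int:
        # Sum of downstream bandwidth across every uplink at this site.
        return sum(u.down_kbps for u in self.uplinks)


# Made-up example: a branch office with two uplinks.
branch = Site("Chicago branch", [
    Uplink("MPLS", up_kbps=10_000, down_kbps=10_000),
    Uplink("Internet", up_kbps=5_000, down_kbps=50_000),
])
```

With a structure like this, a QoS system can look up the bandwidth limits for any site without the administrator restating them in every policy.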

Classes

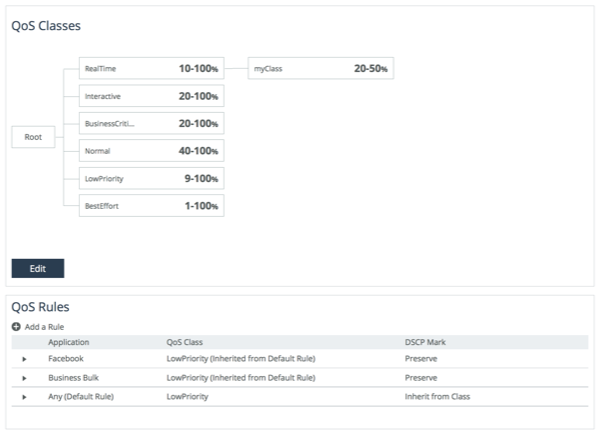

QoS systems usually have the concept of classes. Classes are sort of like buckets. The network administrator puts certain applications into certain buckets, and the system treats each bucket with a certain level of importance. The most important thing to remember about these buckets is that giving one more resources necessarily gives the others less. Putting all traffic into an important bucket is also rarely desirable: QoS works by prioritizing important traffic over less important traffic, and if all traffic is marked as important, it can't do that. Concerns like these were common among network administrators. I attempted to produce UIs that could show some of this information visually, but in the end we settled for a fairly simple tree visualization. A few slides after this, there are some concepts I made for visually showing class information.
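The zero-sum nature of the buckets can be shown with a little arithmetic. The sketch below is only an illustration, assuming each class is given a guaranteed minimum as a percentage of one link; it is not the HFSC algorithm, and the function name, class names, and numbers are made up.

```python
def guaranteed_kbps(link_kbps, min_pct):
    """Turn per-class minimum percentages into guaranteed kbps.

    The guarantees cannot add up to more than the link itself, so
    raising one class's share means lowering another's.
    """
    total = sum(min_pct.values())
    if total > 100:
        raise ValueError(f"minimums add up to {total}%, more than the link")
    return {name: link_kbps * pct // 100 for name, pct in min_pct.items()}


# Made-up example on a 10 Mbps link.
shares = guaranteed_kbps(10_000, {"voice": 30, "business": 50, "default": 20})
```

Here `shares` gives voice 3000 kbps, business 5000 kbps, and default 2000 kbps. Raising voice to 60% without lowering another class pushes the total past 100% and is rejected.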

Rules

QoS classes are like buckets you put apps into, which are then managed by the QoS system. Rules are the mechanism by which the network administrator puts apps into the classes. Because SteelHead has incredibly powerful Deep Packet Inspection (DPI) technology, specifying apps is very easy. Users can search for commonly known app names instead of writing intricate IP address and port mappings. Our team also pre-grouped the apps into about 8 groups. Each group contains approximately 800 applications. A rule can be specified with an individual app or an individual app group. This makes configuring QoS extremely fast.

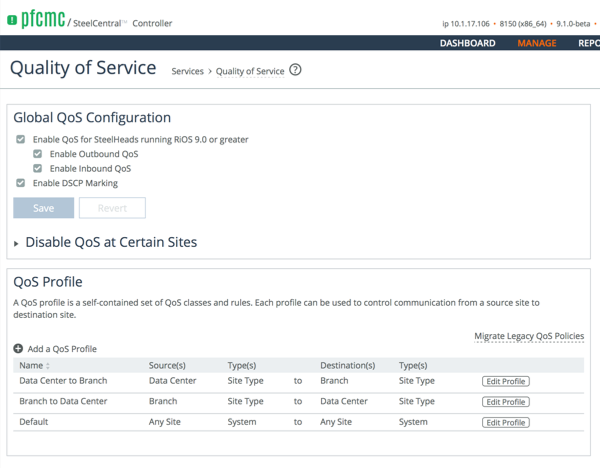
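A rule set like this can be pictured as a short lookup table. The Python sketch below is a simplified illustration: the app names, group name, class names, and the first-match-wins ordering are all assumptions for the example, not the product's documented behavior.

```python
# Hypothetical app group; the real groups are much larger.
APP_GROUPS = {
    "Video Conferencing": {"VideoApp A", "VideoApp B"},
}

# Each rule names either a single app or a single app group,
# plus the QoS class that matching traffic is placed into.
RULES = [
    ("app", "Backup Tool", "low-priority"),
    ("group", "Video Conferencing", "realtime"),
]


def classify(app, rules, groups, default="default"):
    """Return the QoS class for an app (assumed: first matching rule wins)."""
    for kind, target, qos_class in rules:
        if kind == "app" and app == target:
            return qos_class
        if kind == "group" and app in groups.get(target, set()):
            return qos_class
    return default  # unmatched traffic falls into a default class
```

For example, `classify("VideoApp B", RULES, APP_GROUPS)` returns `"realtime"` through the group rule, with no IP addresses or ports involved.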

Profiles

QoS profiles are how you connect QoS classes, rules, and sites. The SteelCentral Controller has a bird's-eye view of all the SteelHeads on a customer's network, and we designed the profiles UI to take advantage of this extra knowledge. The network administrator chooses a source and a destination site, or group of sites, that each profile applies to. With this information, the SteelCentral Controller knows how to distribute the QoS profiles to all appliances automatically. This simplification makes QoS configuration far more declarative, which is something we strive for. We call it 'intent'-based management: the network administrator specifies what they want, rather than how to make it happen. This is an incredibly powerful paradigm for the new QoS feature.
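To show what 'intent'-based distribution could look like, here is a minimal Python sketch. It assumes, purely for illustration, that a profile is pushed to the appliances at each source site along with the destination it applies to. The function names, data shapes, site names, and appliance names are all hypothetical and do not describe how the SteelCentral Controller actually works.

```python
from itertools import product


def resolve(names, site_groups):
    """Expand site-group names into individual sites, keeping order."""
    sites = []
    for name in names:
        for site in site_groups.get(name, [name]):
            if site not in sites:
                sites.append(site)
    return sites


def expand_profile(profile, site_groups, appliances_by_site):
    """Map each appliance to the (profile, destination) pairs it receives."""
    sources = resolve(profile["source"], site_groups)
    destinations = resolve(profile["destination"], site_groups)
    plan = {}
    for src, dst in product(sources, destinations):
        if src == dst:
            continue  # a site does not need a profile for traffic to itself
        for appliance in appliances_by_site.get(src, []):
            plan.setdefault(appliance, []).append((profile["name"], dst))
    return plan


# Made-up network: two branches and one data center.
site_groups = {"Branches": ["Chicago", "Montreal"]}
appliances_by_site = {
    "Chicago": ["sh-chi-1"],
    "Montreal": ["sh-mtl-1", "sh-mtl-2"],
    "London DC": ["sh-ldn-1"],
}
profile = {"name": "Branch to DC", "source": ["Branches"],
           "destination": ["London DC"]}
plan = expand_profile(profile, site_groups, appliances_by_site)
```

The administrator states only the intent (branches to the data center), and the expansion to three individual appliances happens automatically.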

Experimentation

As with all products and features, not everything the design team creates makes it into the product. Sometimes the features are not needed, sometimes they are too complex, and sometimes they are just way too cool. Below are some experiments I made that never shipped.

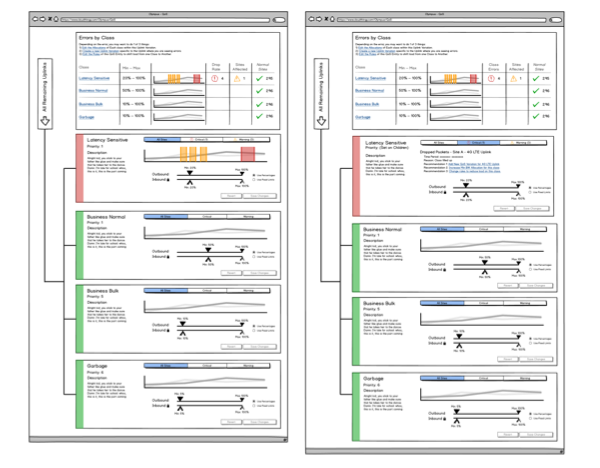

QoS Class Feedback

Setting the min and max bandwidth allocations for a QoS class is kind of a guessing game: set the numbers and see how it goes. To alleviate this, I created some mockups that would advise the user of issues with their class configurations and summarize each class's congestion over time in a visual way.

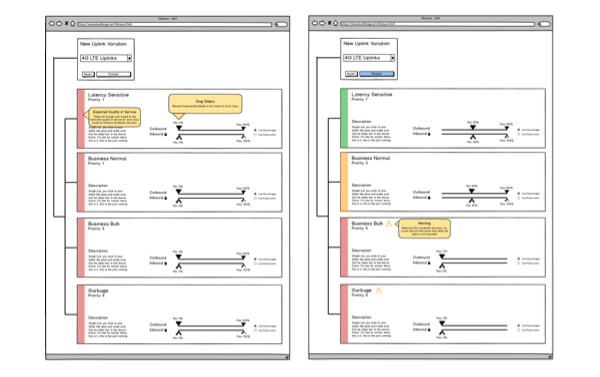

Uplink Variations

Uplink Variations is a system in which the network admin can select different QoS class min and max assignments based on the uplink being used. This is important when one or more network connections fail at a site. In those conditions, critical traffic needs a higher priority.
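Since this experiment never shipped, the following Python sketch is only one way the idea could be expressed: a base set of class limits plus per-uplink overrides. The uplink names, class names, and percentages are made up for the example.

```python
# Base (min %, max %) per QoS class, used on the primary uplink.
BASE_LIMITS = {"voice": (20, 40), "business": (30, 80), "default": (10, 100)}

# Hypothetical overrides that apply only while a given uplink is in use.
VARIATIONS = {
    "backup-lte": {"voice": (50, 80), "default": (5, 20)},
}


def effective_limits(active_uplink, base, variations):
    """Return class limits for the uplink in use, falling back to the base."""
    limits = dict(base)  # copy so the base table is never modified
    limits.update(variations.get(active_uplink, {}))
    return limits


failover = effective_limits("backup-lte", BASE_LIMITS, VARIATIONS)
```

When traffic fails over to the slower backup link, voice is guaranteed a larger share, while classes without an override keep their base limits.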